Scientists in Munich have completed the first detailed simulation of the Sumatra earthquake that triggered a devastating tsunami on the day after Christmas in 2004. The results offer new insights into the underlying geophysical processes.

The Christmas 2004 Sumatra-Andaman earthquake was one of the most powerful and destructive seismic events in history. It triggered a series of tsunamis, killing at least 230,000 people. The exact sequence of events involved in the earthquake remains unclear.

A deeper understanding of the geophysical processes involved is now at hand, thanks to a simulation performed by a team of geophysicists, computer scientists and mathematicians from the Technical University of Munich (TUM) and LMU Munich on the SuperMUC supercomputer at the Leibniz Supercomputing Center (LRZ) of the Bavarian Academy of Sciences. This largest-ever rupture dynamics simulation of an earthquake could facilitate the development of more reliable early warning systems. The results of the simulation will be presented at the International Conference on High-Performance Computing, Networking, Storage and Analysis (SC 17) in Denver, Colorado, which began on November 12th.

Precise forecasting is practically impossible

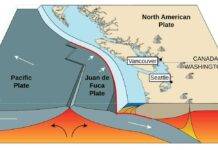

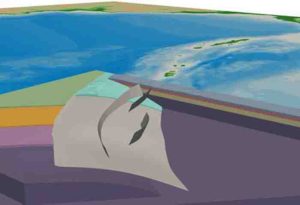

In subduction zones – locations where tectonic plates meet at seams in the Earth’s crust, with one plate moving below the other – earthquakes occur at regular intervals. However, it is not yet precisely known under what conditions such “subduction earthquakes” can cause tsunamis or how big such tsunamis will be.

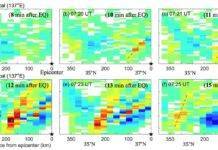

Earthquakes are highly complex physical processes that span very different scales. The mechanical processes at the rupture front take place on a scale of a few meters at most, whereas the Earth’s surface rises and falls over an area of hundreds of square kilometers. During the Sumatra earthquake, the tear in the Earth’s crust extended for more than 1,500 km (roughly the distance from Munich to Helsinki, or from Los Angeles to Seattle), the longest fault rupture ever observed. Within 10 minutes, the earthquake displaced the seafloor vertically by as much as 10 meters.

Simulation with over 100 billion degrees of freedom

To simulate the entire earthquake, the scientists covered the area extending from India to Thailand with a three-dimensional mesh consisting of over 200 million elements and incorporating more than 100 billion degrees of freedom.
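
The two mesh figures can be related with simple arithmetic: about 100 billion degrees of freedom spread over about 200 million elements is roughly 500 unknowns per element. The sketch below computes that ratio from the rounded numbers in the text and compares it with one discretization that would give a similar count. The choice of degree-5 polynomials on tetrahedra and nine wave quantities per element is an assumption made for illustration; the article does not state which scheme was used.

```python
# Rounded figures taken from the article text.
ELEMENTS = 200_000_000               # "over 200 million elements"
DEGREES_OF_FREEDOM = 100_000_000_000  # "more than 100 billion degrees of freedom"


def basis_functions_3d(degree: int) -> int:
    """Number of polynomials up to the given total degree in three dimensions."""
    return (degree + 1) * (degree + 2) * (degree + 3) // 6


# Unknowns per mesh element implied by the article's numbers.
ratio = DEGREES_OF_FREEDOM / ELEMENTS

# ASSUMPTION (not from the article): degree-5 polynomials and nine
# quantities per element (six stress components, three velocities).
ASSUMED_DEGREE = 5
ASSUMED_QUANTITIES = 9
dofs_per_element = basis_functions_3d(ASSUMED_DEGREE) * ASSUMED_QUANTITIES

print(f"implied by the article: {ratio:.0f} unknowns per element")
print(f"assumed discretization: {dofs_per_element} unknowns per element")
```

Under these assumptions each element carries 504 unknowns, which is close to the ratio of about 500 implied by the article's rounded figures.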

The size of the elements varied according to the required resolution: A much finer mesh was used along the fault in order to resolve the complex frictional processes, and on the surface so as to take into account the topographical features and the relatively low-velocity seismic waves found there. In areas with little complexity and fast waves, a coarser mesh was employed.

To calculate the pattern of seismic wave propagation, more than three million time steps had to be computed over the smallest elements. As input data, the team used all available information on the geological structure of the subduction zone and the initial conditions on the seafloor, as well as laboratory experiments on rock fracturing behavior.
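
As a rough back-of-envelope check, the step count gives a feel for how small the time step on the finest elements must have been. The sketch below divides the simulated duration by the number of steps. It assumes the roughly eight minutes of simulated time quoted later in the article and treats "more than three million" as exactly three million, so the result is an upper estimate, not a figure reported by the team.

```python
def smallest_time_step(simulated_seconds: float, n_steps: int) -> float:
    """Average step length if n_steps uniform steps cover the simulated time."""
    return simulated_seconds / n_steps


# ASSUMPTIONS: about eight minutes of simulated earthquake, and exactly
# three million steps on the smallest elements (the article says "more than").
SIMULATED_SECONDS = 8 * 60
N_STEPS = 3_000_000

dt = smallest_time_step(SIMULATED_SECONDS, N_STEPS)
print(f"time step on the smallest elements: about {dt * 1e3:.2f} ms")
```

With these numbers the finest elements advance in steps of about 0.16 milliseconds.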

In addition to the large so-called megathrust plate boundary, the scientists considered three smaller splay faults, or branching faults, suspected of having strongly impacted the tsunami-triggering deformation of the ocean floor.

Almost 50 quintillion operations

“To make it possible to finish the simulation on SuperMUC within a reasonable period of time, it ultimately took five years of preparations to optimize our SeisSol earthquake simulation software. Just two years ago, the computing time for the simulation would have been 15 times longer,” explains Michael Bader, a professor of informatics at TUM.

All of the algorithmic components, from data input and output and the numerical algorithms used to solve the physical equations through to the parallel implementation on thousands of multicore processors, had to be optimized for the SuperMUC.

The Sumatra simulation still took almost 14 hours of processing time on all 86,016 cores of the SuperMUC, which performed nearly 50 quintillion (5 × 10^19) operations in total. That corresponds to almost 10^15 operations per second, or around 1 petaflop/s, one-third of the machine’s theoretical maximum computing performance.
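
The throughput figures can be cross-checked with simple arithmetic. Sustaining about 10^15 operations per second for about 14 hours gives a total of roughly 5 × 10^19 operations, and if that rate is one-third of the peak, the implied peak is about 3 petaflop/s. The sketch below uses only the rounded numbers from the text, so its results are estimates.

```python
# Rounded figures taken from the article text.
RUNTIME_HOURS = 14                # "almost 14 hours"
SUSTAINED_OPS_PER_SECOND = 1e15   # "around 1 petaflop/s"
FRACTION_OF_PEAK = 1 / 3          # "one-third of the theoretical maximum"
CORES = 86_016

# Total work: sustained rate multiplied by the runtime in seconds.
total_operations = RUNTIME_HOURS * 3600 * SUSTAINED_OPS_PER_SECOND

# Peak rate implied by running at one-third of it.
implied_peak = SUSTAINED_OPS_PER_SECOND / FRACTION_OF_PEAK

# Average sustained rate per core.
per_core = SUSTAINED_OPS_PER_SECOND / CORES

print(f"total operations: {total_operations:.2e}")
print(f"implied peak: {implied_peak / 1e15:.1f} petaflop/s")
print(f"sustained per core: {per_core / 1e9:.1f} gigaflop/s")
```

The total comes out at about 5.04 × 10^19 operations, with each core sustaining on the order of 11.6 gigaflop/s.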

The largest and longest earthquake simulation ever performed

“We successfully completed the largest earthquake simulation of its kind ever seen,” says LMU geophysicist Dr. Alice-Agnes Gabriel. “With a duration of around eight minutes, it is also the longest. On top of that, it was the first-ever physics-based scenario for a real subduction rupture process. With the simultaneous calculation of the complicated fracture of several fault segments and the subsurface propagation of seismic waves, we gained exciting insights into the geophysical processes of the earthquake.”

In particular, says Dr. Gabriel, “The splay faults, which can be imagined as pop-up fractures alongside the known subduction trench, led to abrupt, long-period, vertical displacements of the seafloor, and thus to an increased tsunami risk. At present, this capability of incorporating such realistic geometries into physical earthquake models is unique worldwide.”

Note: The above post is reprinted from materials provided by Ludwig Maximilian University of Munich.